Local Collision Avoidance for Unmanned Surface Vehicles based on an End-to-End Planner with a LiDAR Beam Map

Image credit: Unsplash

Abstract

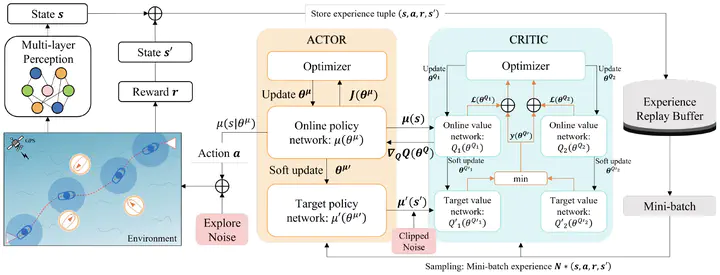

Collision avoidance is critical to the safe navigation of unmanned surface vehicles (USVs). This paper presents an end-to-end solution for local path planning of USVs, focusing on enhanced obstacle evasion and smoother navigation. By leveraging deep reinforcement learning (DRL), we translate relative distance states directly into navigational actions, eliminating the need for cumbersome map maintenance and complex feature extraction. A novel observation modality, the beam map, is designed to accurately perceive obstacles in all directions, mimicking the functionality of an onboard LiDAR system. To further refine collision avoidance maneuvers, a warning zone is introduced that adjusts the agent’s sensitivity to obstacles and allows ample time and space for decision-making. Additionally, we propose a continuous-time short-distance constraint to calculate rewards for adherence to the International Regulations for Preventing Collisions at Sea (COLREGs), enabling legal and rational navigation without requiring prior knowledge of the encounter situation. Extensive experiments comparing various RL policies and classical methods demonstrate the planner’s exceptional obstacle avoidance capability and adaptability to changing environments. Using real-world inland ship navigation data, four steering scenarios are designed to further validate the efficacy of the proposed method.
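
To make the beam map and warning zone more concrete, the following is a minimal sketch and not the paper's implementation. It assumes circular obstacles, evenly spaced beams over 360 degrees, and a simple linear penalty inside the warning zone. The names `beam_map`, `warning_penalty`, `n_beams`, `max_range`, and `warn_radius` are hypothetical and chosen only for illustration.

```python
import math


def beam_map(pos, heading, obstacles, n_beams=36, max_range=50.0):
    """Return one range reading per beam, evenly spaced around the vessel.

    pos:       (x, y) position of the vessel.
    heading:   vessel heading in radians; beam 0 points along the heading.
    obstacles: iterable of (cx, cy, radius) circles (a simplifying assumption).
    """
    px, py = pos
    readings = []
    for k in range(n_beams):
        angle = heading + 2.0 * math.pi * k / n_beams
        dx, dy = math.cos(angle), math.sin(angle)
        nearest = max_range  # readings are clipped to the sensor range
        for cx, cy, r in obstacles:
            fx, fy = px - cx, py - cy
            c = fx * fx + fy * fy - r * r
            if c <= 0.0:
                nearest = 0.0  # vessel is already inside the obstacle
                continue
            # Solve |p + t*d - centre|^2 = r^2 for the nearest hit t >= 0.
            b = fx * dx + fy * dy
            disc = b * b - c
            if disc < 0.0:
                continue  # the beam misses this circle
            t = -b - math.sqrt(disc)
            if 0.0 <= t < nearest:
                nearest = t
        readings.append(nearest)
    return readings


def warning_penalty(readings, warn_radius=15.0):
    """Hypothetical shaping term: zero outside the warning zone, then
    decreasing linearly to -1 as the closest reading approaches zero."""
    closest = min(readings)
    if closest >= warn_radius:
        return 0.0
    return -(warn_radius - closest) / warn_radius


if __name__ == "__main__":
    obs = [(10.0, 0.0, 2.0)]
    scan = beam_map((0.0, 0.0), 0.0, obs, n_beams=4)
    print(scan, warning_penalty(scan, warn_radius=16.0))
```

In this sketch the list of readings would serve as the agent's observation, and the penalty starts to act before a collision occurs, which is one plausible way to give the agent time and space to react. The reward actually used in the paper may differ.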